Google launches AI tools for mental health research and treatment

Field guide and multi-year investment target anxiety, depression, and psychosis interventions worldwide.

Google announced two new artificial intelligence initiatives on July 7, 2025, designed to support mental health organizations in scaling evidence-based interventions and advancing research into anxiety, depression, and psychosis treatments.

The first initiative involves a comprehensive field guide developed in partnership with Grand Challenges Canada and McKinsey Health Institute. According to the announcement from Dr. Megan Jones Bell, Clinical Director for Consumer and Mental Health at Google, "This guide offers foundational concepts, use cases and considerations for using AI responsibly in mental health treatment, including for enhancing clinician training, personalizing support, streamlining workflows and improving data collection."

The field guide addresses the global shortage of mental health providers, particularly in low- and middle-income countries. According to analysis from the McKinsey Health Institute cited in the document, "closing this gap could result in more years of life for people around the world, as well as significant economic gains."


Summary

Who: Google for Health, Google DeepMind, Grand Challenges Canada, McKinsey Health Institute, and Wellcome Trust, targeting mental health organizations and task-sharing programs globally.

What: Two AI initiatives including a practical field guide for scaling mental health interventions and a multi-year research investment for developing new treatments for anxiety, depression, and psychosis.

When: Announced July 7, 2025, with ongoing development and research partnerships extending over multiple years.

Where: Global implementation with focus on low- and middle-income countries where mental health provider shortages are most acute.

Why: Address the global shortage of mental health providers and democratize access to quality, evidence-based mental health support through AI-powered scaling solutions and advanced research.


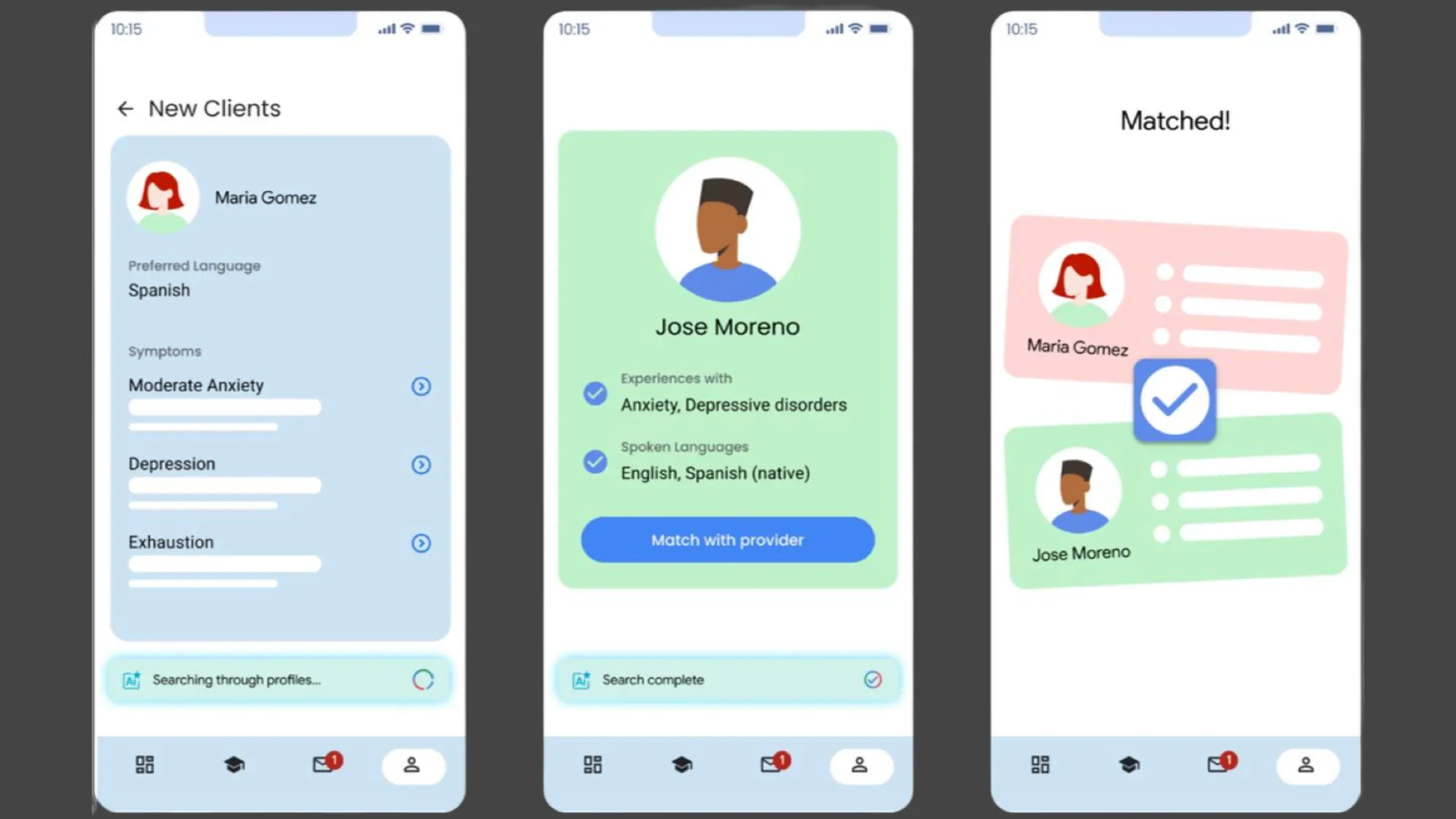

The 73-page guide outlines nine specific AI use cases for mental health task-sharing programs, including applicant screening tools, adaptive training interfaces, real-time guidance companions, and provider-client matching systems. These tools aim to address challenges such as supervisor shortages, inconsistent feedback, and protocol drift that limit the effectiveness of current mental health programs.

Task-sharing models allow trained people who are not mental health professionals to deliver evidence-based mental health services, expanding access in underserved communities. The guide demonstrates how AI can standardize training, reduce administrative burdens, and maintain quality while scaling these programs.

According to the field guide documentation, "By standardizing training and avoiding the need for a human to be involved at every phase of the process, AI can help mental health task-sharing programs effectively scale evidence-based interventions throughout communities, maintaining a high standard of psychological support."

The second initiative is a multi-year investment from Google for Health and Google DeepMind in partnership with Wellcome Trust. The investment, which includes research grant funding from the Wellcome Trust, will support research projects developing more precise, objective, and personalized ways to measure anxiety, depression, and psychosis.

The research partnership aims to explore new therapeutic interventions, potentially including novel medications. This represents an expansion beyond current AI applications into fundamental research for mental health treatment development.

The field guide acknowledges that "the application of AI in task-sharing models is new and only a few pilots have been conducted." Many of the outlined use cases remain theoretical and require real-world validation across different cultural contexts and healthcare systems.

For the marketing community, these developments signal growing regulatory attention to AI applications in healthcare advertising. Recent California guidance on AI healthcare supervision and Google's new certification requirements for pharmaceutical advertising demonstrate increased scrutiny of AI-powered health technologies.

The field guide emphasizes the importance of regulatory compliance for AI mental health tools. Several proposed use cases, including triage facilitators and provider-client matching systems, could be classified as medical devices, which would bring them under regulatory oversight by the FDA in the United States or under the EU Medical Device Regulation.

Organizations considering these AI tools must evaluate technical infrastructure requirements, including cloud versus edge computing approaches, data privacy compliance, and integration with existing healthcare systems. The guide recommends starting with pilot programs and establishing governance committees before full-scale implementation.

Technical implementation challenges include model selection between proprietary and open-source systems, data preparation costs ranging from $10,000 to $90,000, and ongoing maintenance expenses of 10 to 30 percent of initial development costs annually.
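To make those ranges concrete, the arithmetic can be sketched in a few lines of Python. This is a rough illustration, not a method taken from the guide: the function name `estimate_budget`, the 200,000 USD initial development cost, and the three-year horizon are all hypothetical, and treating data preparation as a one-time cost separate from initial development is an assumption made here for simplicity.

```python
# Ranges quoted in the article from the field guide.
DATA_PREP_COST_USD = (10_000, 90_000)  # data preparation, (low, high)
MAINTENANCE_RATE = (0.10, 0.30)        # annual share of initial development cost


def estimate_budget(initial_dev_cost_usd: float, years: int) -> tuple[float, float]:
    """Return a (low, high) total cost estimate in USD over `years`.

    Assumption (not from the guide): data preparation is a one-time cost
    added on top of the initial development cost.
    """
    totals = []
    for prep_cost, rate in zip(DATA_PREP_COST_USD, MAINTENANCE_RATE):
        maintenance = initial_dev_cost_usd * rate * years
        totals.append(initial_dev_cost_usd + prep_cost + maintenance)
    return totals[0], totals[1]


# Hypothetical inputs: 200,000 USD initial development, three years of operation.
low, high = estimate_budget(200_000, 3)
print(f"Estimated three-year cost: {low:,.0f} to {high:,.0f} USD")
```

Under these assumed inputs, maintenance alone adds between 60,000 and 180,000 USD over three years, which is why the guide's advice to plan for sustainability applies even to small pilots.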

The initiatives build on growing evidence that task-sharing approaches can improve clinical outcomes while reducing costs. Research cited in the guide shows that mental health task-sharing programs are cost-effective and can increase the number of people treated while reducing mental health symptoms, particularly in low-resource settings.

Real-world implementations highlighted in the guide include The Trevor Project's AI-powered crisis counselor training bot, which trained more than 1,000 crisis counselors in approximately one year, and Partnership to End Addiction's embedded AI simulations for peer coach training.

These organizations report improved training efficiency and enhanced quality of coach conversations through AI implementation, suggesting practical benefits for established mental health programs.

The field guide warns that successful AI adoption requires comprehensive planning across technical, ethical, governance, and sustainability dimensions. Organizations must establish clear policies for responsible AI use, conduct risk assessments, and maintain human oversight throughout implementation.

According to the World Health Organization principles referenced in the guide, responsible AI in healthcare must protect autonomy, promote human well-being, ensure transparency, foster responsibility and accountability, ensure inclusiveness, and promote responsive and sustainable development.


Timeline

- July 7, 2025: Google announces two AI initiatives for mental health research and treatment

- January 2025: California issues guidance requiring physician supervision of healthcare AI systems

- May 2024: FDA reports 981 AI and machine learning software devices authorized for medical use

- Development ongoing: Field guide created through 10+ discovery interviews, expert summit with 20+ specialists, 5+ real-life case studies, and review of 100+ peer-reviewed articles